Olga Subirós & Lev Manovich | Big Bang Data Revisited

Keywords: analog, big bang data, CCCB, Centre de Cultura Contemporania de Barcelona, CUNY Graduate Center, data visualization, datafication, Digital, Lev Manovich, Olga Subiros

A visual essay and exchange between curator Olga Subirós and scholar Lev Manovich.

Big Bang Data Revisited

“After the novel and subsequently cinema privileged narrative

as the key form of cultural expression of the modern age, the computer

age introduces its correlate — database. But it is also appropriate

that we would want to develop poetics, aesthetics,

and ethics of this database.”

– Lev Manovich

‘The Language of New Media’. Cambridge: MIT Press, 2001

In May 2014 the Centre de Cultura Contemporània de Barcelona (CCCB) unveiled Big Bang Data, a touring exhibition that uses a range of projects to explore the recent emergence of the database as a socio-political framework. The exhibition asks how the “datafication of the world” is transforming our society, from scientific study and government agencies to businesses and arts and culture. After five months in Barcelona, it traveled to Fundación Telefónica in Madrid (where it is currently on view); then to Buenos Aires, London, and Singapore. The curators of Big Bang Data, Olga Subirós and José Luis de Vicente, drew on centuries of theory about information and computation, with special attention to the recent expansion of digital storage and its attendant capabilities. The work of media theorist Lev Manovich was central to the investigation. An e-mail exchange organized with Olga Subirós and Lev Manovich provides a tour of some of the most intriguing data visualizations and exploration projects from the last several years. The result is a visual essay mixed with provocations, curiosities, and observations that raises new questions and provides directions for additional research in the fields of cultural analytics, data visualization, and the study of societies awash in data.

What motivated the decision to feature Lev Manovich’s quote as part of the Big Bang Data exhibition?

Olga Subirós: The datafication of the world, far beyond digitization, is a process that is as decisive for the 21st century as electrification was for the 19th.

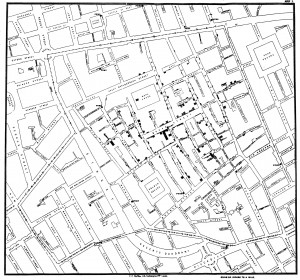

It affects cultural expression such as contemporary art, but also design, urbanism, science, humanities studies and even soccer. The show is about data as raw material: from Dr. Snow to Snowden – that is, from Dr. Snow’s cholera map in 1854 to Edward Snowden’s revelations in 2013. Data is evolving in terms of production, quantity (massive data produced by social and sensor networks) and capacity (smaller and cheaper storage devices).

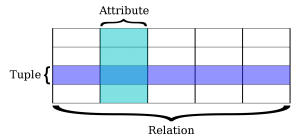

Lev Manovich: Database form, in the sense of a catalog of media objects, is certainly important today (I wrote about it first in 1988) – witness the enormous catalogs of amazon.com and ebay.com, millions of songs on music streaming services like Spotify and Rdio, or Wikipedia. But a database – and in many cases, simply a data table (organized like an Excel spreadsheet) – is also central to “big data” society in another way.

Our society uses statistical and computational data analysis (i.e., “data mining” and “machine learning”) to extract value from large datasets, make decisions, automate processes and provide user services such as online search and recommendations.

To do that, all kinds of information about the world and people gets organized into essentially a massive table – typically each row represents one object, and the columns contain properties of the objects. All key data analysis techniques such as classification, regression, clustering, etc. work on this data structure.
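As a rough illustration of the table structure described above, the following sketch builds a tiny table in which each row is one object and each column is a property, then groups the rows with a bare-bones two-cluster k-means. The image records, the `brightness` and `saturation` columns, and the helper names are all hypothetical, chosen only for this example; they are not taken from any project discussed here.

```python
# Hypothetical table: each row is one object (an image),
# each column is a property of that object.
rows = [
    {"id": "img1", "brightness": 0.90, "saturation": 0.80},
    {"id": "img2", "brightness": 0.85, "saturation": 0.75},
    {"id": "img3", "brightness": 0.20, "saturation": 0.30},
    {"id": "img4", "brightness": 0.15, "saturation": 0.25},
]
FEATURES = ("brightness", "saturation")  # columns used for the analysis


def as_vector(row):
    """Turn one table row into a list of numeric properties."""
    return [row[name] for name in FEATURES]


def distance(a, b):
    """Euclidean distance between two property vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) ** 0.5


def mean(vectors):
    """Column-wise mean of a list of property vectors."""
    return [sum(col) / len(col) for col in zip(*vectors)]


def cluster_two_groups(table, iterations=10):
    """Minimal k-means with k=2, seeded with the first and last rows."""
    vectors = [as_vector(r) for r in table]
    centers = [vectors[0], vectors[-1]]
    labels = [0] * len(vectors)
    for _ in range(iterations):
        # Assign every row to its nearest cluster center.
        labels = [
            min((0, 1), key=lambda k: distance(v, centers[k]))
            for v in vectors
        ]
        # Move each center to the mean of the rows assigned to it.
        for k in (0, 1):
            members = [v for v, lab in zip(vectors, labels) if lab == k]
            if members:
                centers[k] = mean(members)
    return {r["id"]: lab for r, lab in zip(table, labels)}


groups = cluster_two_groups(rows)
print(groups)
```

The same rows-and-columns layout would serve equally well as input to a classifier or a regression; only the step applied to the table changes.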

OS: Lately Lev has been exploring a non-corporate way of extracting value from large datasets with the Selfiecity project. Another example is your latest project, On Broadway, which, in the project’s words, “proposes a new visual metaphor for thinking about the city: a vertical stack of image and data layers.”

Your projects analyze and visualize big cultural data (Instagram, Twitter, etc.), but the results go beyond that. I am especially interested in how a new visual metaphor for thinking about ourselves and the city emerges from your work. I guess readers would like to know what it’s like to work with a large database, both the creative process and the technological issues that arise.

The CUNY Graduate Center in New York is opening a Center for Digital Scholarship and Visualization, where Prof. Manovich is a faculty member. One report described it as “surfacing insights from datasets that museums and other cultural institutions have provided.” Might we challenge the “inevitability” of cultural analytics? One “quote” from the report (wrongly attributed to a faculty member) stuck out to me because I think it revealed some of the ideological implications of cultural analytics: “They [museums] have these amazing cultural riches, and they’re not doing much with it.” Doesn’t this quote too easily assume that non-digitized and algorithmically sorted cultural artifacts are somehow being wasted, as if to say that all objects have a natural need to be run through platforms of optimization and analysis?

LM: I think that this particular coverage of our new initiative and ideas did not represent them correctly. We all love museums and they are doing a great job in creating narratives and experiences using their collections – so obviously we did not want to say “they’re not doing much with it.” And while I believe that cultural analytics can reveal interesting new patterns in the history of art and in museum collections, it certainly does not happen automatically every time. I see our work as supplementing other methods of scholarship and curating, not replacing them.

Interesting. I think that is in itself relevant to the discussion, especially since the press is predisposed to hype certain initiatives, sometimes to dubious lengths. I asked about the particular quote since it seemed almost too over the top. How do we separate mere marketing speak from what Olga and José refer to as a “fundamental transition in the history of knowledge”?

Of all the turning points over the last century, which historical moment, event, innovation, or tool was the most significant for our datalogical existence? In other words, how precise can we be about identifying the catalyst to the “information explosion”?

OS: In our Big Bang Data exhibition we had a massive red dot marking a historical moment: “2002” as a kind of year zero, the before and after of analog capacity. This came from the research article “The World’s Technological Capacity to Store, Communicate, and Compute Information” by Martin Hilbert and Priscila López, published in Science in 2011.

To summarize with one of their quotes: “2002 could be considered the beginning of the digital age, the year in which worldwide digital storage capacity overtook total analog capacity. As of 2007, almost 94 percent of our memory is in digital form”.

Many artists today are increasingly confronted by the temptation or necessity to engage with fields typically associated with science and engineering: broadly speaking, “technology.” One of the compelling features of Big Bang Data is the overcoming of this traditional division between art and science, recognizing instead the inescapability of networked culture and the way new art engages with systems theory.

OS: I’m not so comfortable with the expression “new art”. I do increasingly see artists approaching science and scientists. Big Bang Data shows Heather Dewey-Hagborg’s work Stranger Visions, and she has recently presented Invisible at Transmediale 2015. I’m very much interested in how she works at the intersection of art and science. At the Big Bang Data exhibition we also show many works that use scientific and real-time data as the raw material to produce artistic works, which is absolutely key and contemporary.

Do you think traditional notions of beauty and aesthetic contemplation are relevant to the context of Big Bang Data, or do they belong elsewhere?

LM: Contemporary data visualizations often follow very traditional notions of beauty. For example, a large proportion of network visualizations present networks as highly symmetrical structures (use and filter by “method” to see many examples of this). Given that modernism was fighting against symmetry, such visualizations are more classical than modern.

But data visualization is also “classical” in another, more important way. Most of the basic visualization techniques used today – bar, line or radial plots, histograms, scatter plots – were invented at the end of the 18th and in the early 19th century. While of course some new types were also introduced in the 1970s-1990s, when computers started to be used for visualization, the great majority of visualizations we see today use the classical types from 200 years ago. But of course, the “data culture” of 200 years ago was very different: data sets were quite small, the graphs were made by hand, and the modern “discipline” society, which relies heavily on statistics and data analysis to keep track of its subjects, was only getting started. If today our society wants to extract knowledge from “big data,” why are we still using the same techniques from 200 years ago? I think that this is very strange. Probably we have not yet invented new techniques appropriate for understanding data on the contemporary scale.

OS: Just take a look at these three works and consider whether beauty is relevant or not. Big Bang Data shows Telepresent Water, a piece by David Bowen (see vimeo above). This work takes real-time data from a scientific buoy station in the middle of the ocean. The wave intensity and frequency are scaled and transferred to a mechanical grid structure, resulting in a simulation of the physical effects caused by the movement of water at this distant location.

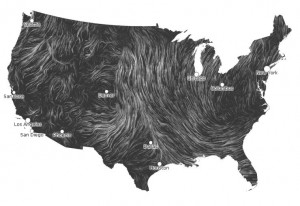

Big Bang Data also features Windmap (using real time data flow from the National Digital Forecast Database) by Fernanda Viegas and Martin Wattenberg.

Has the contemporary art field broken with the past in an irreversible way, just as the digital era has revolutionized analog modes of storage and archiving?

OS: Digital also relates to fingers (digitus is Latin for finger). The digital has always had its B-side: the need for the tactile. I see the need for tactile experience growing. And not only the tactile contact of human to object, but of human to human. When I think of the last Documenta I think of the work by Tino Sehgal, This Variation.

Regarding modes of storage and archiving, of course they have to deal with their obsolescence (Moore’s law). But new ways of storing data are emerging, such as the recent achievement by Harvard scientists who stored 700 terabytes in a single gram of DNA, which is still unaffordable but promising.

LM: Contemporary art is using the set of techniques and concepts defined in the 1960s. All its moves – installations, performances, video, ironic attitude, conceptualism, disregard for media specificity – were refined by 1970. If a field has not updated its methods in 40 years, how can you expect it to offer anything exciting? Compare this stagnation to rapid developments in the sciences, big changes in social norms, globalization, etc. Often I feel that art today is the least creative of all human fields.

But what is equally strange is that despite its appearance of being always new and constantly changing, digital media is also largely based on ideas from the 1960s and early 1970s. By the mid-1970s, the key concepts which underlie the contemporary use of computers were all established – networks, the UNIX operating system, SQL (the most widely used database language), the GUI and the use of a computer as a universal media machine for creating and reading, viewing, watching and listening to many media (see my 2013 book “Software Takes Command” on the last point). But in the case of digital media there have of course also been plenty of other innovations, which at least until recently were more interesting to follow than “contemporary art.”

However, in the last few years, as big social networks became the real mass media of our society, catering to everybody, the digital space became less exciting for me than, let’s say, 10 years ago. In 10 years, what used to be the equivalent of an “avant-garde studio” became the equivalent of network TV.

However, things don’t end with video games, social networks, 3D printers and other “digital mass culture.” “Big data” is a very large social and cultural development which is only getting started, so for me this is currently still the most interesting thing. And of course, in the humanities, curating and cultural criticism, the big data era is still some way ahead. This is a very fun area to work in now.

If data is reducible to the quantifiable, and artists and their work can be mapped according to affinities based on different attributes, then does a curator qualitatively turn into something like an algorithm for artworks?

OS: Hahaha! That’s a good one. Curators replaced by algorithms, just like journalists, television series scriptwriters, stock market brokers, etc… Why not? How can we assume that it is not going to happen? How do you know that you are not talking to a bot right now? We live in a moment where we constantly have to prove that we are human, so we might reach the conclusion that there are bots everywhere by default.

More Information about Big Bang Data http://bigbangdata.cccb.org/en/

More Information about the CUNY Graduate Center Digital Initiatives http://gcdi.commons.gc.cuny.edu/

John Snow – Published by C.F. Cheffins, Lith, Southampton Buildings, London, England, 1854 in Snow, John. On the Mode of Communication of Cholera, 2nd Ed, John Churchill, New Burlington Street, London, England, 1855.

Detail of Zeus’ Affairs, 2012 by Ilaria Pagin, Viviana Ferro, Elisa Zamarian, available at http://www.visualcomplexity.com/vc/project_details.cfm?id=835&index=835&domain=
